Hire The Best PySpark Tutor

Top Tutors, Top Grades. Without The Stress!

52,000+ Happy Students From Various Universities

How Much Does Private 1:1 Tutoring & HW Help Cost?

Private 1:1 tutoring and HW help cost $20–$35 per hour on average.

Your PySpark job is failing at 3 AM and the error trace means nothing. That’s exactly when MEB tutors are available.

PySpark Tutor Online

PySpark is the Python API for Apache Spark, enabling distributed data processing across large-scale datasets. It equips practitioners with tools for batch processing, streaming, machine learning pipelines, and SQL analytics at production scale.

Finding a qualified PySpark tutor online is harder than finding a Python or SQL tutor, because the skill set is genuinely niche. MEB covers PySpark as part of its full data science tutoring programme, with tutors who have hands-on Spark cluster experience, not just textbook familiarity. If you’ve searched for a “PySpark tutor near me” and found nothing local, online is the better option anyway: whiteboard tools and screen sharing work well for debugging DataFrame transformations and DAG optimisation. You understand the logic before you write a single line of production code.

- 1:1 online sessions tailored to your course syllabus or workplace project

- Expert-verified tutors with real distributed computing experience

- Flexible time zones — US, UK, Canada, Australia, Gulf

- Structured learning plan built after a diagnostic session

- Guided project support — we explain the logic, you build and submit

52,000+ students across the US, UK, Canada, Australia, and the Gulf have used MEB since 2008 — including students in Data Science subjects like PySpark, Big Data, and Data Analysis.

Source: My Engineering Buddy, 2008–2025.

How Much Does a PySpark Tutor Cost?

Most PySpark tutoring sessions run $20–$40/hr. Graduate-level or highly specialised topics — Spark internals, custom partitioning strategies, ML pipeline tuning — can reach $100/hr. Start with the $1 trial: 30 minutes of live tutoring or one full problem explained in detail.

| Level / Need | Typical Rate | What’s Included |
|---|---|---|
| Standard (most course levels) | $20–$40/hr | 1:1 sessions, project guidance, code review |
| Advanced / Specialist | $40–$100/hr | Spark internals, ML pipelines, cluster optimisation |
| $1 Trial | $1 flat | 30 min live session or one full question explained |

Tutor availability tightens around university project submission windows and professional certification exam periods. Book early if you have a hard deadline.

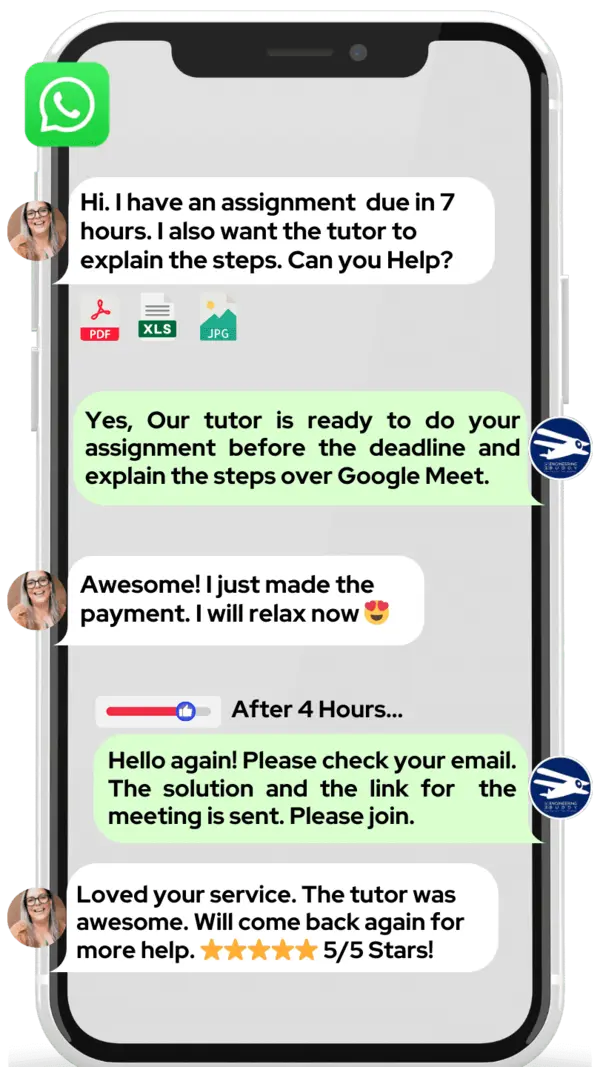

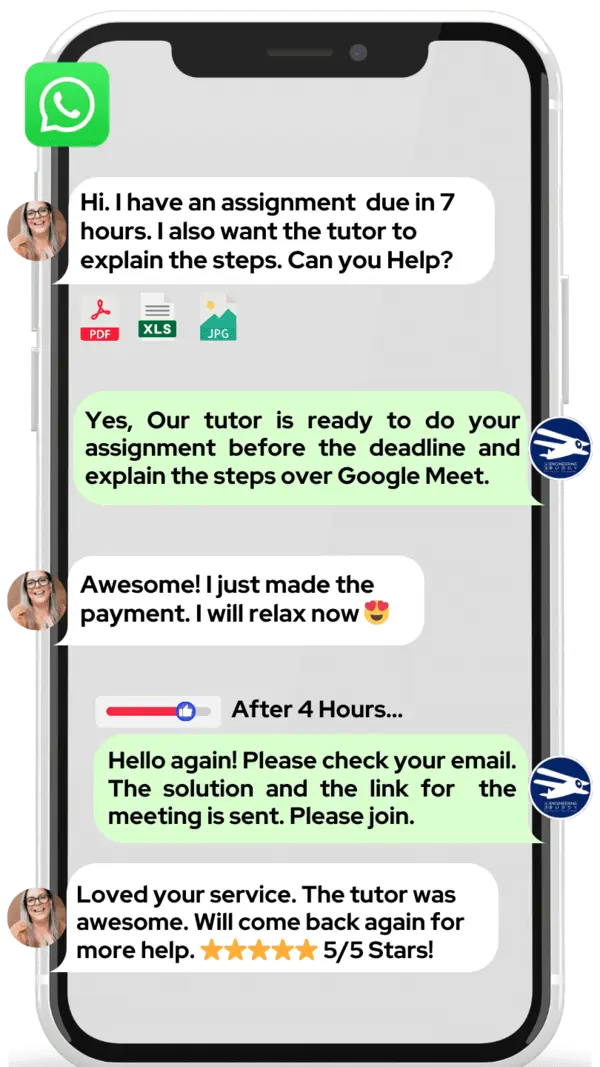

WhatsApp MEB for a quick quote — average response time under 1 minute.

Who This PySpark Tutoring Is For

Most students who come to MEB for PySpark help are not complete beginners. They’ve written Python, run some SQL, maybe even touched pandas. The gap is the distributed computing layer (partitioning, shuffles, lazy evaluation, Spark execution plans), and that gap tends to surface at the worst possible moment.

- Masters and PhD students running large-scale data pipelines for their thesis work

- Undergraduate students in computer science or data engineering programmes at universities like MIT, Carnegie Mellon, University of Toronto, Imperial College London, and ETH Zurich

- Working professionals preparing for the Databricks Certified Associate Developer for Apache Spark exam or similar Spark certifications

- Students with a project submission deadline approaching and significant gaps still to close

- Engineers transitioning from pandas-based workflows to distributed Spark environments

- Data mining and analytics students whose coursework has scaled beyond single-machine tools

Need help with artificial intelligence or ML pipeline integration alongside your PySpark work? MEB tutors can cover both in the same session. Try it for $1 before committing to a longer block.

1:1 Tutoring vs Self-Study vs AI vs YouTube vs Online Courses

Self-study works if you’re disciplined and the docs are enough. PySpark’s official documentation is thorough, but it won’t tell you why your job is running out of memory on a 32-node cluster. AI tools explain syntax fast but can’t watch you build an execution plan and catch the skewed partition you’re missing. YouTube covers RDDs and DataFrames at a conceptual level; it stops when your specific transformation logic breaks. Online courses follow a fixed path: useful for foundations, useless when you’re three weeks from a deadline with a specific blocker. A 1:1 online PySpark tutor works through your actual code and your actual error, in real time.

Outcomes: What You’ll Be Able To Do in PySpark

After working with an MEB tutor, you’ll be able to write and optimise DataFrame transformations without triggering unnecessary shuffles, apply window functions and aggregations across partitioned datasets, build and tune Spark ML pipelines using MLlib, explain Spark’s lazy evaluation model and DAG execution to a technical interviewer, and diagnose job failures from the Spark UI rather than trial-and-error log scanning. These aren’t abstract skills — they’re the exact capabilities that show up in capstone project rubrics and certification exams.

Based on feedback from 40,000+ sessions collected by MEB from 2022 to 2025, 58% of students improved by one full grade after approximately 20 hours of 1:1 tutoring in subjects like PySpark. A further 23% achieved at least a half-grade improvement.

Source: MEB session feedback data, 2022–2025.

At MEB, we’ve found that PySpark students who struggle with performance issues almost always have the same root problem: they’re thinking in pandas and writing Spark. One session on the execution model changes everything that follows.
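That pandas-versus-Spark gap mostly comes down to lazy evaluation. The sketch below is a toy model in plain Python, not the PySpark API: the `LazyFrame` class and its methods are hypothetical names chosen for illustration. Transformations only append steps to a plan; nothing is read until an action runs, and without caching every action re-runs the whole plan from the source.

```python
from typing import Callable, Iterable, List, Optional, Tuple

Step = Tuple[str, Callable[[Iterable[dict]], Iterable[dict]]]


class LazyFrame:
    """Toy model of lazy evaluation: transformations record steps, actions run them."""

    def __init__(self, source: Callable[[], Iterable[dict]], plan: Optional[List[Step]] = None):
        self._source = source        # called once per action, like re-reading input files
        self._plan = plan or []      # ordered list of recorded transformation steps

    def filter(self, pred: Callable[[dict], bool]) -> "LazyFrame":
        # Transformation: returns a new frame with one more recorded step; no data is read.
        def step(rows: Iterable[dict]) -> Iterable[dict]:
            return (r for r in rows if pred(r))
        return LazyFrame(self._source, self._plan + [("filter", step)])

    def with_column(self, name: str, fn: Callable[[dict], object]) -> "LazyFrame":
        # Transformation: adds a derived column, again only recorded in the plan.
        def step(rows: Iterable[dict]) -> Iterable[dict]:
            return ({**r, name: fn(r)} for r in rows)
        return LazyFrame(self._source, self._plan + [("with_column", step)])

    def explain(self) -> List[str]:
        return ["scan"] + [label for label, _ in self._plan]

    def collect(self) -> List[dict]:
        # Action: only now is the source read and every recorded step applied in order.
        rows: Iterable[dict] = self._source()
        for _, step in self._plan:
            rows = step(rows)
        return list(rows)

    def count(self) -> int:
        return len(self.collect())


reads = {"scans": 0}


def read_source() -> List[dict]:
    reads["scans"] += 1  # counts how often the "input" is read
    return [{"id": i, "amount": i * 10} for i in range(5)]


df = (LazyFrame(read_source)
      .filter(lambda r: r["amount"] > 10)
      .with_column("doubled", lambda r: r["amount"] * 2))
print(reads["scans"])  # 0: building the plan read nothing
print(df.explain())    # ['scan', 'filter', 'with_column']
print(df.count())      # 3: the first action reads the source
print(df.collect())    # the second action reads the source again
print(reads["scans"])  # 2: without caching, each action re-runs the whole plan
```

In real PySpark, `filter()` and `withColumn()` play the transformation role and `count()` and `collect()` are actions; calling `df.cache()` before several actions on the same DataFrame lets later actions reuse the materialised result instead of rescanning the input.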

What We Cover in PySpark (Syllabus / Topics)

Core PySpark and DataFrame API

- SparkSession setup, SparkContext, and application configuration

- RDD operations vs DataFrame API — when to use which

- Schema inference, StructType, and explicit schema definition

- Transformations vs actions — lazy evaluation and the DAG model

- Joins, unions, and handling data skew in wide transformations

- Window functions: rank, row_number, lag, lead across partitions

- User-defined functions (UDFs) and pandas UDFs for vectorised operations

Core references: Learning Spark (Damji et al., O’Reilly), Spark: The Definitive Guide (Chambers & Zaharia, O’Reilly).
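Window functions are a common sticking point from that list. As a rough model only, the hypothetical helper below reproduces in plain Python what `row_number()` and `lag()` compute over a window partitioned by one column and ordered by another:

```python
from collections import defaultdict
from typing import Dict, List


def add_row_number_and_lag(rows: List[dict], partition_col: str,
                           order_col: str, lag_col: str) -> List[dict]:
    """Plain-Python model of row_number() and lag(lag_col, 1) over
    Window.partitionBy(partition_col).orderBy(order_col)."""
    groups: Dict[object, List[dict]] = defaultdict(list)
    for row in rows:
        groups[row[partition_col]].append(row)  # one window partition per key
    out: List[dict] = []
    for members in groups.values():
        # Ordering applies inside each partition only; numbering restarts at 1.
        ordered = sorted(members, key=lambda r: r[order_col])
        previous = None  # lag() returns null for the first row of a partition
        for position, row in enumerate(ordered, start=1):
            out.append({**row, "row_number": position, "lag": previous})
            previous = row[lag_col]
    return out


rows = [
    {"dept": "eng", "name": "a", "salary": 120},
    {"dept": "eng", "name": "b", "salary": 100},
    {"dept": "ops", "name": "c", "salary": 90},
    {"dept": "eng", "name": "d", "salary": 110},
]
for r in add_row_number_and_lag(rows, "dept", "salary", "salary"):
    print(r["dept"], r["name"], r["row_number"], r["lag"])
```

In PySpark the equivalent would be along the lines of `w = Window.partitionBy("dept").orderBy("salary")` followed by `df.withColumn("row_number", F.row_number().over(w)).withColumn("lag", F.lag("salary", 1).over(w))`, with `Window` from `pyspark.sql.window` and `F` as `pyspark.sql.functions`. The `partitionBy` is what makes this a wide operation: Spark has to shuffle so that all rows sharing a key end up together. With ties in the ordering column, Spark does not guarantee which tied row gets the lower number.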

Spark SQL and Data Engineering Pipelines

- Writing and optimising Spark SQL queries against registered temp views

- Reading and writing Parquet, Delta, JSON, and CSV at scale

- Partitioning strategies: static and dynamic partitioning, plus bucketing

- Caching, persistence levels, and broadcast joins

- Structured Streaming: sources, sinks, watermarking, trigger modes

- Delta Lake basics: ACID transactions, time travel, MERGE operations

References: Delta Lake: The Definitive Guide (Lee et al., O’Reilly), plus arXiv computer science preprints on distributed query optimisation.
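MERGE and time travel are easier to reason about with a concrete model. The `ToyDeltaTable` class below is a hypothetical plain-Python sketch, assuming one source row per key, that mimics the upsert semantics of a MERGE with “update when matched, insert when not matched” and keeps every committed version readable:

```python
from typing import Dict, List


class ToyDeltaTable:
    """Plain-Python model of Delta Lake MERGE (upsert) and time travel.
    Each commit stores a full snapshot; real Delta Lake keeps a transaction log
    over Parquet data files instead."""

    def __init__(self, rows: List[dict], key: str):
        self.key = key
        self._versions: List[Dict[object, dict]] = [{r[key]: dict(r) for r in rows}]  # version 0

    def merge(self, source: List[dict]) -> Dict[str, int]:
        current = dict(self._versions[-1])  # start from the latest committed snapshot
        stats = {"updated": 0, "inserted": 0}
        for row in source:
            if row[self.key] in current:    # like whenMatchedUpdateAll()
                stats["updated"] += 1
            else:                           # like whenNotMatchedInsertAll()
                stats["inserted"] += 1
            current[row[self.key]] = dict(row)
        self._versions.append(current)      # the whole merge commits as one new version
        return stats

    def read(self, version_as_of: int = -1) -> List[dict]:
        # version_as_of=-1 reads the latest version; 0 reads the original table.
        snapshot = self._versions[version_as_of]
        return sorted(snapshot.values(), key=lambda r: r[self.key])


table = ToyDeltaTable([{"id": 1, "qty": 5}, {"id": 2, "qty": 3}], key="id")
print(table.merge([{"id": 2, "qty": 7}, {"id": 3, "qty": 1}]))  # {'updated': 1, 'inserted': 1}
print(table.read())                 # latest version after the upsert
print(table.read(version_as_of=0))  # time travel back to version 0
```

With the delta-spark package, the real calls would be roughly `DeltaTable.forPath(spark, path).alias("t").merge(updates.alias("s"), "t.id = s.id").whenMatchedUpdateAll().whenNotMatchedInsertAll().execute()` for the upsert and `spark.read.format("delta").option("versionAsOf", 0).load(path)` for time travel. Unlike this sketch, Delta Lake rejects a MERGE in which several source rows match the same target row.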

MLlib and Machine Learning Pipelines

- Feature engineering with VectorAssembler, StringIndexer, OneHotEncoder

- Pipeline API: stages, fit, transform, and cross-validation

- Classification and regression with MLlib estimators

- Hyperparameter tuning with CrossValidator and ParamGridBuilder

- Model persistence — saving and loading PipelineModels

- Integration with NumPy and pandas for pre- and post-processing

References: Machine Learning with Spark (Pentreath, Packt), Spark: The Definitive Guide.
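The feature-engineering stages listed above compose into a single feature vector. This hypothetical plain-Python sketch mimics what `StringIndexer`, `OneHotEncoder`, and `VectorAssembler` each contribute; in MLlib they produce Spark’s own (often sparse) vector types rather than Python lists, and the alphabetical tie-break here is an assumption for determinism.

```python
from collections import Counter
from typing import Dict, List, Sequence, Union


def fit_string_indexer(values: Sequence[str]) -> Dict[str, float]:
    # Most frequent label gets index 0.0, like StringIndexer's default
    # stringOrderType="frequencyDesc"; ties are broken alphabetically here.
    counts = Counter(values)
    ordered = sorted(counts, key=lambda label: (-counts[label], label))
    return {label: float(i) for i, label in enumerate(ordered)}


def one_hot(index: float, num_categories: int, drop_last: bool = True) -> List[float]:
    # OneHotEncoder drops the last category by default, so it encodes as all zeros.
    size = num_categories - 1 if drop_last else num_categories
    vec = [0.0] * size
    if int(index) < size:
        vec[int(index)] = 1.0
    return vec


def assemble(*parts: Union[float, List[float]]) -> List[float]:
    # VectorAssembler-style concatenation of scalars and vectors into one feature vector.
    features: List[float] = []
    for part in parts:
        features.extend(part if isinstance(part, list) else [float(part)])
    return features


cities = ["paris", "lyon", "paris", "nice", "paris", "lyon"]
ages = [30, 41, 25, 52, 38, 29]
indexer = fit_string_indexer(cities)  # {'paris': 0.0, 'lyon': 1.0, 'nice': 2.0}
features = [assemble(one_hot(indexer[c], len(indexer)), a) for c, a in zip(cities, ages)]
print(features[0])  # [1.0, 0.0, 30.0]
print(features[3])  # [0.0, 0.0, 52.0]: "nice" is the dropped last category
```

In MLlib these three steps would typically be stages of a single `Pipeline`, so `fit()` learns the label ordering once and `transform()` applies it consistently to training and test data.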

Platforms, Tools & Textbooks We Support

PySpark runs across several environments and MEB tutors are comfortable in all of them. Sessions adapt to wherever your code lives.

- Databricks Community Edition and Databricks Workspace

- Apache Spark on local standalone mode and YARN/Kubernetes clusters

- Google Colab and Jupyter Notebooks with PySpark kernel

- AWS EMR, Azure HDInsight, Google Cloud Dataproc

- Delta Lake and Apache Iceberg table formats

- VS Code with PySpark extensions and IntelliJ for Scala interop

What a Typical PySpark Session Looks Like

The tutor opens by checking where you left off — usually a specific transformation, a failing job, or an optimisation task from the previous session. You share your screen or paste the notebook into the shared workspace. The tutor reads your execution plan, points to the shuffle or the Cartesian product you didn’t see, and walks through why it’s happening. You fix it together. Then you write the corrected version yourself while the tutor watches. The session closes with one concrete task — rewrite the skewed join using broadcast, or implement watermarking on the streaming source — and a note on what comes next: usually Spark UI profiling or MLlib pipeline structure.
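The broadcast rewrite mentioned above works because the small side of the join is copied to every executor instead of shuffling the large side. A minimal plain-Python model of that idea, with the hypothetical function `broadcast_hash_join` standing in for what Spark does internally:

```python
from collections import defaultdict
from typing import Dict, List


def broadcast_hash_join(large_partitions: List[List[dict]],
                        small_table: List[dict], key: str) -> List[List[dict]]:
    """Plain-Python model of an inner broadcast hash join.

    The small side becomes one hash map that every partition receives a copy of,
    so rows of the large side are joined where they already live: no shuffle."""
    lookup: Dict[object, List[dict]] = defaultdict(list)
    for row in small_table:
        lookup[row[key]].append(row)  # built once, then "broadcast" to each partition
    joined: List[List[dict]] = []
    for partition in large_partitions:  # each partition joins independently
        joined.append([{**big, **small}
                       for big in partition
                       for small in lookup.get(big[key], [])])
    return joined


orders = [
    [{"order": 1, "country": "US"}, {"order": 2, "country": "UK"}],  # partition 0
    [{"order": 3, "country": "US"}, {"order": 4, "country": "FR"}],  # partition 1
]
countries = [{"country": "US", "region": "NA"}, {"country": "UK", "region": "EU"}]
for i, part in enumerate(broadcast_hash_join(orders, countries, "country")):
    print(i, part)  # order 4 has no match, so the inner join drops it
```

In PySpark the hint is `large_df.join(F.broadcast(small_df), "country")`, with `F` as `pyspark.sql.functions`; Spark also broadcasts automatically when a side is estimated to be under `spark.sql.autoBroadcastJoinThreshold`. The trade-off is memory: the broadcast side must fit comfortably on every executor.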

How MEB Tutors Help You with PySpark (The Learning Loop)

Diagnose: In the first session, the tutor identifies whether your issue is conceptual (you don’t understand lazy evaluation), implementation-level (you trigger several actions on an uncached DataFrame, so Spark re-reads the source each time), or architectural (your partitioning strategy is wrong for your join pattern). These are different problems with different fixes.

Explain: The tutor works through a live example using a digital pen-pad — annotating the DAG, drawing the shuffle boundary, showing exactly where data moves across the network. You see the logic, not just the answer.

Practice: You write the solution yourself. The tutor watches and says nothing until you’re done or genuinely stuck. This is the part that builds retention.

Feedback: The tutor goes through every error — not just what was wrong, but why it costs marks in a project rubric or fails in production. Step by step. No skipping.

Plan: At the end of each session, the next topic is named. If you’re covering Structured Streaming this week, Delta Lake MERGE operations are queued for next. The tutor tracks the sequence so you don’t have to.
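The shuffle boundary the tutor annotates is where rows are regrouped by key, and a single hot key can overload one partition. The plain-Python sketch below assumes a CRC32-based partitioner purely for illustration (Spark SQL hashes with Murmur3) and shows both the skew and key salting, one common manual fix; all function names are hypothetical:

```python
import zlib
from collections import Counter
from typing import List, Set


def partition_for(key: str, num_partitions: int) -> int:
    # Deterministic stand-in for a hash partitioner. Spark SQL uses Murmur3;
    # CRC32 is used here only because it is in the standard library.
    return zlib.crc32(key.encode("utf-8")) % num_partitions


def partition_sizes(keys: List[str], num_partitions: int) -> List[int]:
    # Rows with equal keys always land in the same partition after the shuffle.
    sizes = [0] * num_partitions
    for key in keys:
        sizes[partition_for(key, num_partitions)] += 1
    return sizes


def salt_keys(keys: List[str], hot_keys: Set[str], num_salts: int) -> List[str]:
    # Append a rotating suffix to hot keys so one key's rows become several keys.
    # The other join side must be replicated once per suffix to keep every match.
    return [f"{key}#{i % num_salts}" if key in hot_keys else key
            for i, key in enumerate(keys)]


keys = ["user_1"] * 90 + [f"user_{n}" for n in range(2, 12)]  # 100 rows, one hot key
print(partition_sizes(keys, 4))       # one partition holds at least 90 rows
salted = salt_keys(keys, {"user_1"}, 8)
print(max(Counter(salted).values()))  # 12: the hot key is now split eight ways
print(partition_sizes(salted, 4))
```

Spark 3 can also split skewed join partitions on its own through adaptive query execution (`spark.sql.adaptive.enabled` together with `spark.sql.adaptive.skewJoin.enabled`); manual salting is usually the fallback when that is not enough.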

Sessions run over Google Meet with a digital pen-pad or iPad and Apple Pencil. Before your first session, have your course outline or project brief, the error or question you’re currently blocked on, and your deadline date. The first session starts with a 10-minute diagnostic so every subsequent minute is targeted. Start with the $1 trial — 30 minutes of live tutoring that also serves as your first diagnostic.

Students consistently tell us that the moment PySpark clicks is when they stop thinking of it as “Python that runs on a cluster” and start thinking in terms of distributed execution plans. That shift usually happens within two or three sessions.

Tutor Match Criteria (How We Pick Your Tutor)

Not every data engineer makes a good PySpark tutor. MEB vets on four dimensions.

Subject depth: tutors demonstrate working knowledge of Spark internals — not just the DataFrame API but execution planning, shuffle mechanics, and MLlib architecture. They’re matched to your level: coursework, certification prep, or research-grade pipelines.

Tools: all tutors use Google Meet with a digital pen-pad or iPad and Apple Pencil. Live annotation of execution plans is non-negotiable for this subject.

Time zone: matched to your region. US, UK, Gulf, Canada, and Australia are all covered, including late-night slots that most platforms don’t offer.

Goals: the tutor is briefed on whether you need to pass a certification exam, complete a university project, or build production-level pipeline skills. The approach differs.

Unlike platforms where you fill out a form and wait, MEB responds in under a minute, 24/7. Tutor match takes under an hour. The $1 trial means you test before you commit. Everything runs over WhatsApp — no logins, no intake forms.

Pricing Guide

Standard PySpark tutoring runs $20–$40/hr, covering most undergraduate and early graduate coursework. Rates reach $100/hr for highly specialised work: Spark cluster architecture, custom partitioner design, streaming at production scale, or Databricks certification fast-track prep.

Rate factors include your level, the complexity of the topic, your timeline, and tutor availability. For students targeting roles at data-intensive firms or Databricks/AWS certifications, tutors with professional data engineering backgrounds are available at higher rates — share your specific goal and MEB will match the tier to your ambition.

Availability tightens around university semester ends and certification exam windows. Start with the $1 trial — 30 minutes, no registration, no commitment. WhatsApp MEB for a quick quote.

MEB has been running since 2008 across 2,800+ subjects. 18 years. No registration forms. No waiting days for a match. WhatsApp and you’re in a session within the hour.

Source: My Engineering Buddy, 2008–2025.

Try your first session for $1 — 30 minutes of live 1:1 tutoring or one homework question explained in full. No registration. No commitment. WhatsApp MEB now and get matched within the hour.

FAQ

Is PySpark hard to learn?

PySpark is manageable if you already know Python. The hard part isn’t the syntax — it’s the mental shift to distributed execution. Students with solid Python and some SQL background typically need 8–15 hours of guided work to reach functional fluency with DataFrames and Spark SQL.

How many sessions will I need?

It depends on your starting point and goal. A student who understands Python and needs to complete a single university project can often close their gaps in 4–6 sessions. Certification prep for the Databricks Associate exam typically takes 10–15 sessions across 4–6 weeks.

Can you help with PySpark assignments and project work?

MEB tutoring is guided learning — you understand the work, then submit it yourself. See our Academic Integrity policy and Why MEB page for full details on what we help with and what we don’t. The tutor explains the logic; you write and submit the code.

Will the tutor match my exact syllabus or exam board?

Yes. Share your course outline, university module guide, or certification exam blueprint during onboarding. The tutor is briefed before the first session. If your programme uses a specific Databricks or Spark version, the tutor matches that environment.

What happens in the first session?

The tutor runs a 10-minute diagnostic, usually by reviewing a piece of your existing code or walking through a concept you’ve flagged as a gap. In a 30-minute session, the remaining 20 minutes address your most urgent blocker. The session ends with a mapped plan for what follows.

Is online PySpark tutoring as effective as in-person?

For a technical subject like PySpark, online is often better. Screen sharing, live notebook editing, DAG annotation on a digital pen-pad, and instant code execution are all possible in a remote session. No whiteboard in a campus room can match that workflow.

What’s the difference between PySpark and regular Apache Spark?

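Apache Spark is the underlying distributed engine, written mainly in Scala. PySpark is its Python API. Most data science coursework and many engineering roles use PySpark because Python is the dominant language in that ecosystem. Performance is near-identical for DataFrame and SQL workloads, because both APIs compile to the same optimised execution plan; plain Python UDFs are the main exception, since rows must be passed to Python worker processes.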

Can I get help with Structured Streaming or Delta Lake specifically?

Yes. Both are covered under the MEB PySpark curriculum. Structured Streaming — watermarking, trigger modes, output sinks — and Delta Lake MERGE, time travel, and schema evolution are topics MEB tutors work through regularly with graduate and professional students.
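For watermarking, the core rule is small enough to model directly. The sketch below is a simplified plain-Python approximation, not Spark’s implementation: `windowed_counts` is a hypothetical helper, event times are plain integers in seconds, and the watermark advances only between micro-batches.

```python
from typing import Dict, List, Tuple


def windowed_counts(batches: List[List[int]], window_size: int,
                    delay: int) -> Tuple[Dict[int, int], float, List[int]]:
    """Plain-Python approximation of tumbling-window counts with a watermark.

    Each batch is a list of event times in seconds. After every micro-batch the
    watermark becomes max(event time seen) - delay; an event whose window already
    ended at or before the watermark is treated as too late and dropped."""
    counts: Dict[int, int] = {}
    dropped: List[int] = []
    max_seen = float("-inf")
    watermark = float("-inf")
    for batch in batches:
        for event_time in batch:
            window_start = event_time - event_time % window_size
            if window_start + window_size <= watermark:
                dropped.append(event_time)  # window already finalised
                continue
            counts[window_start] = counts.get(window_start, 0) + 1
            max_seen = max(max_seen, event_time)
        watermark = max(watermark, max_seen - delay)  # never moves backwards
    return counts, watermark, dropped


counts, watermark, dropped = windowed_counts([[5, 12, 7], [25, 3], [31, 14], [8]],
                                             window_size=10, delay=10)
print(counts)     # {0: 3, 10: 2, 20: 1, 30: 1}
print(watermark)  # 21
print(dropped)    # [8]
```

In PySpark the streaming version would be roughly `events.withWatermark("event_time", "10 seconds").groupBy(F.window("event_time", "10 seconds")).count()`. Spark only guarantees that events arriving within the delay threshold are kept; events later than that may or may not be dropped, so this sketch’s strict drop rule is a simplification.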

Do you help with PySpark on Databricks specifically?

Yes. Many students and professionals work exclusively in Databricks notebooks. MEB tutors are familiar with Databricks-specific features including Unity Catalog, Databricks Repos, and Databricks Runtime for Machine Learning. Share which environment you’re using when you make contact.

Can I get PySpark help at midnight or on weekends?

Yes. MEB operates 24/7. Tutors are available across time zones, including late-night slots for US and Gulf students. WhatsApp MEB at any hour — average response time is under a minute. The $1 trial has no time restrictions.

How do I get started?

Three steps: WhatsApp MEB with your subject, your current blocker, and your deadline. MEB matches you with a verified tutor — usually within an hour. Your first session starts with a diagnostic and costs $1 for 30 minutes of live tutoring or one full question explained.

Trust & Quality at My Engineering Buddy

MEB tutors go through a multi-stage screening process: subject knowledge assessment, a live demo session evaluated by a senior tutor, and ongoing review based on student feedback. Every PySpark tutor has hands-on experience with distributed data systems — not just academic knowledge of the API. Rated 4.8/5 across 40,000+ verified reviews on Google.

MEB tutoring is guided learning — you understand the work, then submit it yourself. For full details on what we help with and what we don’t, read our Academic Integrity policy and Why MEB.

MEB has served 52,000+ students across the US, UK, Canada, Australia, the Gulf, and Europe since 2008, covering 2,800+ subjects. Data Science is one of MEB’s strongest subject areas — tutors supporting students in data cleaning, sentiment analysis, and informatics are active on the platform daily. See our tutoring methodology for more on how sessions are structured and how tutors are managed.

A common pattern our tutors observe is that students who’ve taken an online Spark course know the vocabulary but can’t debug a real job. Tutoring closes that gap fast — because real code breaks in ways that course videos never show.

Explore Related Subjects

Students studying PySpark often also need support in:

Next Steps

Before your first session, have ready:

- Your course outline, module guide, or certification exam blueprint

- A recent piece of code you’re stuck on, or an assignment you struggled with

- Your project submission or exam date

The tutor handles the rest. Share your availability and time zone over WhatsApp and MEB matches you with a verified PySpark tutor, usually within an hour. The first session starts with a diagnostic so every minute is targeted.

Visit www.myengineeringbuddy.com for more on how MEB works.

WhatsApp to get started or email meb@myengineeringbuddy.com.

Reviewed by Subject Expert

This page has been carefully reviewed and validated by our subject expert to ensure accuracy and relevance.