Hire Verified & Experienced Reinforcement Learning Tutors

Rated 4.8/5 across 40K+ session ratings collected on the MEB platform

Hire The Best Reinforcement Learning Tutor

Top Tutors, Top Grades. Without The Stress!

52,000+ Happy Students From Various Universities

How Much for Private 1:1 Tutoring & Homework Help?

Private 1:1 tutoring and homework help cost $20–$35 per hour on average.

Most students hit a wall at the Bellman equation or policy gradient derivations — and no YouTube video fixes it at midnight before a deadline.

Reinforcement Learning Tutor Online

Reinforcement Learning (RL) is a branch of machine learning where an agent learns to make sequential decisions by interacting with an environment and receiving reward signals. It equips students to design, train, and evaluate RL agents for real-world decision-making problems.

MEB connects you with a 1:1 online Reinforcement Learning tutor, whether you’re working through Q-learning at the undergraduate level, implementing policy gradients for a graduate course, or tackling deep RL for a research project. Search for “Reinforcement Learning tutor near me” and you’ll find generic platforms. MEB gives you a verified expert matched to your exact course, your framework, and your deadline.

- 1:1 online sessions tailored to your course syllabus and specific RL framework

- Verified expert tutors with graduate-level subject knowledge in RL theory and implementation

- Flexible time zones — US, UK, Canada, Australia, Gulf, Europe

- Structured learning plan built after a diagnostic session on your current gaps

- Ethical homework and assignment guidance — you understand the work before you submit

52,000+ students across the US, UK, Canada, Australia, and the Gulf have used MEB since 2008 — across 2,800+ subjects, from AP Calculus to A Level Music Technology to Data Science.

Source: My Engineering Buddy, 2008–2025.

How Much Does a Reinforcement Learning Tutor Cost?

Most RL tutoring sessions with MEB run $20–$40/hr. Graduate-level or research-focused sessions — covering topics like multi-agent RL, model-based methods, or custom reward shaping — may reach up to $100/hr depending on tutor depth and timeline. You can start with a $1 trial: 30 minutes of live 1:1 tutoring or one full homework question explained.

| Level / Need | Typical Rate | What’s Included |
|---|---|---|
| Undergraduate / Standard | $20–$35/hr | 1:1 sessions, homework guidance, worked examples |
| Graduate / Specialist | $35–$100/hr | Expert tutor, deep RL, research-level support |
| $1 Trial | $1 flat | 30 min live session or one full homework question |

Tutor availability tightens around end-of-semester project deadlines and exam periods — especially May and December. Book early if your timeline is tight.

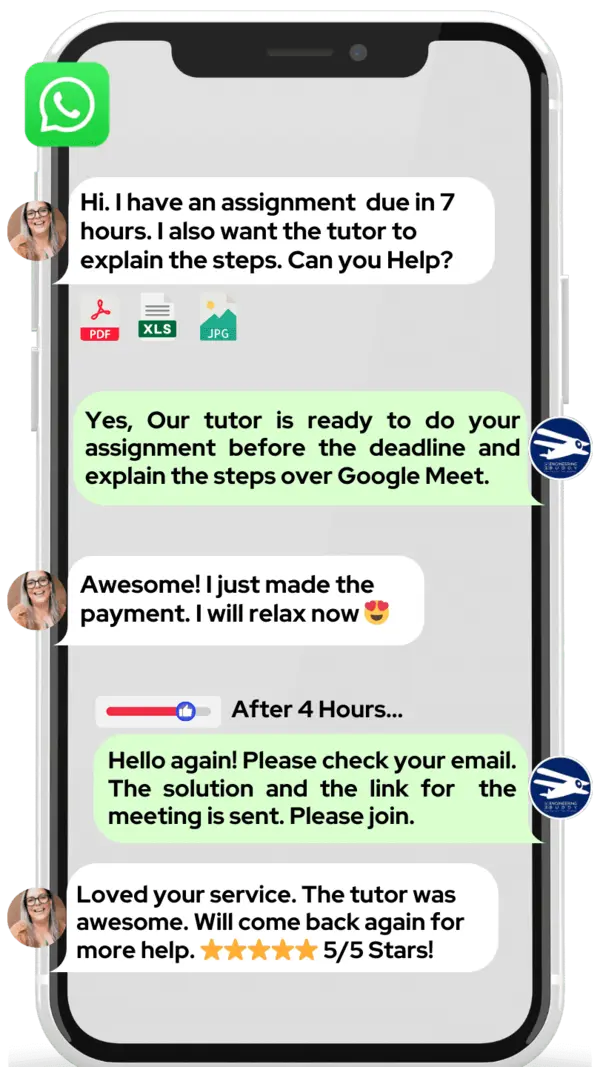

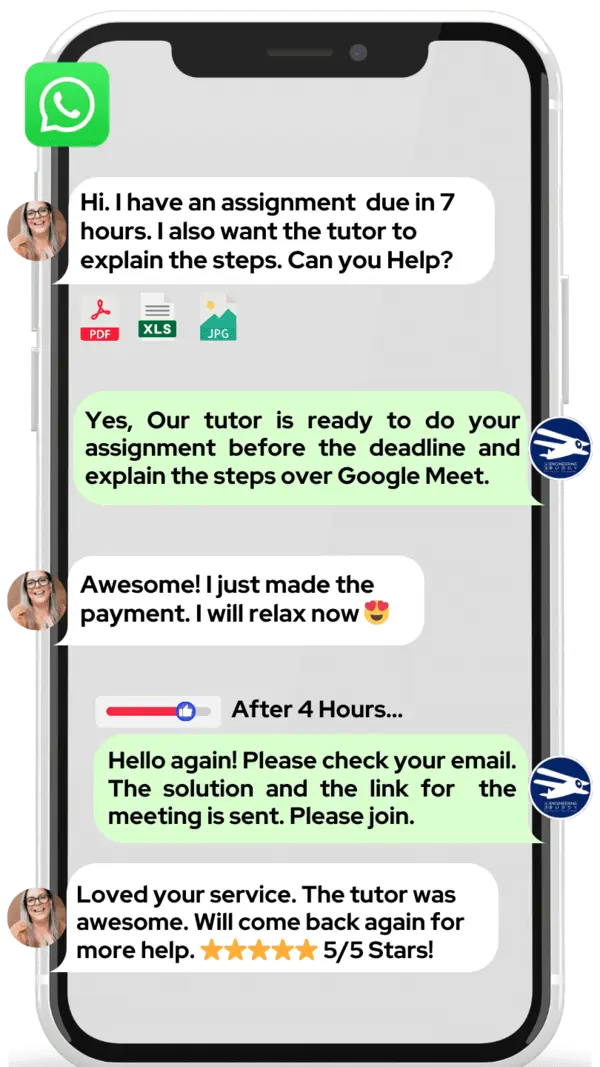

WhatsApp MEB for a quick quote — average response time under 1 minute.

Who This Reinforcement Learning Tutoring Is For

RL sits at the harder end of machine learning coursework. Students often arrive knowing the theory in outline but struggling the moment implementation or proof-based exams demand precision. If that’s where you are, this is built for you.

- Undergraduate students taking an AI or ML course that covers Markov Decision Processes, Q-learning, or policy-based methods

- Graduate students working through deep RL, actor-critic architectures, or multi-agent systems for coursework or research

- Students whose conditional university offer depends on passing this course, where a single grade matters

- PhD candidates needing support with RL theory in a dissertation or implementing custom RL environments

- Students who need machine learning tutoring alongside RL to fill foundational gaps

- Parents supporting a student through an AI or data science degree who want progress tracked and sessions structured

Students come from programmes at institutions including MIT, Carnegie Mellon, Stanford, Imperial College London, the University of Toronto, ETH Zurich, and the University of Sydney — among many others.

1:1 Tutoring vs Self-Study vs AI Tools

Self-study works for motivated students — but RL has a deceptive quality: you can read a chapter on temporal difference learning, believe you’ve understood it, and then fail to implement it correctly three times in a row without knowing why. AI tools are fast for generating code snippets or explaining individual concepts, but they cannot watch you derive the Bellman optimality equation, catch the exact step where your reasoning breaks, and correct it live. They don’t know whether you’ve confused on-policy and off-policy methods in your specific assignment context. MEB gives you an expert who can do exactly that — working in your framework, on your course material, in real time with a digital pen-pad — while keeping sessions structured so progress is measurable, not accidental.

Outcomes: What You’ll Be Able To Do in Reinforcement Learning

After working with an MEB Reinforcement Learning tutor, students consistently report sharper clarity on both theory and code. You’ll be able to model sequential decision problems as Markov Decision Processes and prove convergence properties from first principles. You’ll apply Q-learning and SARSA correctly to grid-world and continuous-state environments. You’ll implement policy gradient methods — including REINFORCE and PPO — without confusing the objective function. You’ll analyse reward shaping choices and explain their effect on agent behaviour in written assignments or oral exams. You’ll present your RL project findings clearly, whether that’s a trained OpenAI Gym agent or a custom environment built in Python.

Based on feedback from 40,000+ sessions collected by MEB from 2022 to 2025, 58% of students improved by one full grade after approximately 20 hours of 1:1 tutoring in a single subject. A further 23% achieved at least a half-grade improvement.

Source: MEB session feedback data, 2022–2025.

What We Cover in Reinforcement Learning (Syllabus / Topics)

Foundations: MDPs, Dynamic Programming, and Temporal Difference Learning

- Markov Decision Processes — states, actions, transition probabilities, reward functions

- Bellman equations — expectation and optimality forms, derivation and application

- Policy evaluation, policy improvement, and policy iteration

- Value iteration and convergence proofs

- Temporal difference learning — TD(0), TD(λ), eligibility traces

- On-policy vs off-policy methods — SARSA vs Q-learning distinctions

Core texts for this track include Sutton & Barto’s Reinforcement Learning: An Introduction (2nd ed.) and Szepesvári’s Algorithms for Reinforcement Learning.
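To make the on-policy vs off-policy distinction in the list above concrete, here is a minimal tabular sketch. It assumes a NumPy Q-table indexed as `q[state, action]`; the function names and the tiny 2-state table are hypothetical and chosen only for illustration. SARSA and Q-learning share the same update step and differ only in the target.

```python
import numpy as np

def sarsa_target(q, reward, next_state, next_action, gamma):
    """On-policy target: bootstrap from the action the agent actually takes next."""
    return reward + gamma * q[next_state, next_action]

def q_learning_target(q, reward, next_state, gamma):
    """Off-policy target: bootstrap from the greedy action in the next state."""
    return reward + gamma * np.max(q[next_state])

def td_update(q, state, action, target, alpha):
    """Move Q(state, action) a fraction alpha toward the target (in place)."""
    q[state, action] += alpha * (target - q[state, action])
    return q

# Hypothetical 2-state, 2-action Q-table, for illustration only.
q = np.array([[0.0, 1.0],
              [2.0, 5.0]])

# Same transition, two different targets.
sarsa = sarsa_target(q, reward=1.0, next_state=1, next_action=0, gamma=0.9)
qlearn = q_learning_target(q, reward=1.0, next_state=1, gamma=0.9)
print(sarsa, qlearn)
```

When the next action is exploratory rather than greedy, the two targets diverge. That gap is why the two algorithms can learn different policies in the same environment.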

Policy Gradient Methods and Actor-Critic Architectures

- REINFORCE algorithm — gradient estimation, variance reduction techniques

- Baseline subtraction and advantage functions

- Actor-critic methods — A2C, A3C architectures

- Proximal Policy Optimization (PPO) — clipped objective, implementation details

- Trust Region Policy Optimization (TRPO) — theory and practical constraints

- Deterministic policy gradients — DDPG and its extensions

Recommended texts include Goodfellow, Bengio & Courville’s Deep Learning and Bertsekas’s Reinforcement Learning and Optimal Control.
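As a rough illustration of the PPO clipped objective mentioned in the list above, the sketch below computes the surrogate for a batch using plain NumPy. It assumes log-probabilities and advantage estimates are already available as arrays; the function name and the default `clip_eps=0.2` are illustrative choices, not a reference implementation from any particular library.

```python
import numpy as np

def ppo_clipped_objective(new_log_probs, old_log_probs, advantages, clip_eps=0.2):
    """Mean clipped surrogate objective (to be maximised) over a batch."""
    # Probability ratio pi_new(a|s) / pi_old(a|s), computed in log space.
    ratio = np.exp(new_log_probs - old_log_probs)
    unclipped = ratio * advantages
    clipped = np.clip(ratio, 1.0 - clip_eps, 1.0 + clip_eps) * advantages
    # Element-wise minimum gives a pessimistic bound on the improvement.
    return np.mean(np.minimum(unclipped, clipped))

# Hypothetical two-sample batch: ratios of 1.5 and 0.5.
old_lp = np.log(np.array([0.5, 0.5]))
new_lp = np.log(np.array([0.75, 0.25]))
adv = np.array([1.0, -1.0])
objective = ppo_clipped_objective(new_lp, old_lp, adv)
print(objective)
```

In a training loop the negative of this value would typically serve as the loss, usually alongside value-function and entropy terms that are omitted here.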

Deep RL, Multi-Agent Systems, and Real-World Applications

- Deep Q-Networks (DQN) — experience replay, target networks, dueling architectures

- Model-based RL — Dyna-Q, world models, and sample efficiency

- Exploration strategies — epsilon-greedy, UCB, Thompson sampling, curiosity-driven

- Multi-agent RL — cooperative, competitive, and mixed-motive settings

- Reward shaping, sparse rewards, and inverse RL

- RL environments — OpenAI Gym, MuJoCo, custom Python environments

- RL for robotics, game playing, and sequential recommendation systems

Students in this track frequently reference Mnih et al. (DQN paper), Silver et al. (AlphaGo), and supporting material from deep learning tutoring resources.
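Two of the building blocks listed above, experience replay and epsilon-greedy exploration, can be sketched with the Python standard library alone. The class and function names are hypothetical, and a real DQN implementation would add batching into tensors, a target network, and an epsilon schedule.

```python
import random
from collections import deque

class ReplayBuffer:
    """Fixed-capacity store of transitions; the oldest entries are dropped first."""

    def __init__(self, capacity):
        self.buffer = deque(maxlen=capacity)

    def push(self, state, action, reward, next_state, done):
        self.buffer.append((state, action, reward, next_state, done))

    def sample(self, batch_size):
        # Uniform sampling without replacement breaks temporal correlation.
        return random.sample(list(self.buffer), batch_size)

    def __len__(self):
        return len(self.buffer)

def epsilon_greedy(q_values, epsilon):
    """Pick a random action with probability epsilon, else the greedy action."""
    if random.random() < epsilon:
        return random.randrange(len(q_values))
    return max(range(len(q_values)), key=lambda a: q_values[a])

# Illustrative usage with dummy integer states.
buffer = ReplayBuffer(capacity=3)
for t in range(5):
    buffer.push(t, 0, 1.0, t + 1, False)
batch = buffer.sample(2)
greedy_action = epsilon_greedy([0.1, 0.9, 0.3], epsilon=0.0)
```

With a capacity of 3 and five pushes, only the three most recent transitions remain available for sampling.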

Platforms, Tools & Textbooks We Support

Reinforcement Learning coursework is almost always implementation-heavy. MEB tutors work across the tools your course actually uses — no generic support, no guessing.

- Python (NumPy, PyTorch, TensorFlow, JAX)

- OpenAI Gym and Gymnasium environments

- Stable Baselines3 and RLlib

- MuJoCo and PyBullet for continuous control

- Jupyter Notebooks and Google Colab

- MATLAB (for control-theoretic RL formulations)

- Custom environment building and debugging in Python

What a Typical Reinforcement Learning Session Looks Like

The tutor opens by checking where you got stuck last time — often a policy gradient derivation or a broken training loop in PyTorch. From there, you work through the problem together on screen: the tutor uses a digital pen-pad to annotate the Bellman update step or sketch the actor-critic flow while you follow along and ask questions in real time. Then you take over — you rework the derivation or modify the code while the tutor watches and corrects immediately, not after. The session closes with a specific task: implement DQN on CartPole with experience replay, or rewrite the TD update for a new environment. The next topic is noted so you start the following session without spending ten minutes recapping.

How MEB Tutors Help You with Reinforcement Learning (The Learning Loop)

Diagnose: In the first session, the tutor runs through a short set of targeted questions — not a generic quiz, but specific probes around your course material. They find out whether your gap is in MDP formulation, implementation confidence, or exam technique under time pressure.

Explain: The tutor works through the problem live using a digital pen-pad. For RL, that typically means annotating the Bellman equation step by step, tracing through a Q-table update with actual numbers, or walking through a policy gradient proof while you watch and interrupt.

Practice: You attempt the next problem with the tutor present — not after the session. This is the part that changes outcomes. Silent self-study after a session doesn’t catch the moment your reasoning goes wrong.

Feedback: The tutor corrects errors at the exact step they occur, explains why that step costs marks in an exam context, and shows the correct path before moving on. No vague “try again” — every correction is specific.

Plan: Each session ends with a clear next topic and a concrete task. The tutor tracks what you’ve covered and adjusts the sequence if your exam date shifts or a new assignment topic appears.
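For a sense of what "tracing through a Q-table update with actual numbers" in the Explain step can look like, here is one worked Q-learning update. The numbers are made up for illustration: current estimate $Q(s,a) = 2.0$, reward $r = 1.0$, discount $\gamma = 0.9$, learning rate $\alpha = 0.5$, and best next-state value $\max_{a'} Q(s',a') = 4.0$.

```latex
\begin{align*}
Q_{\text{new}}(s,a) &= Q(s,a) + \alpha \bigl[ r + \gamma \max_{a'} Q(s',a') - Q(s,a) \bigr] \\
                    &= 2.0 + 0.5 \bigl[ 1.0 + 0.9 \times 4.0 - 2.0 \bigr] \\
                    &= 2.0 + 0.5 \times 2.6 \\
                    &= 3.3
\end{align*}
```

The bracketed term, 2.6, is the TD error. The update moves the estimate halfway toward the target of 4.6 because $\alpha = 0.5$.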

Sessions run over Google Meet. The tutor uses a digital pen-pad or iPad with Apple Pencil for live annotation. Before your first session, share your course syllabus or assignment sheet, a recent attempt at a problem you struggled with, and your exam or project deadline. The first session covers your diagnostic and the highest-priority gap. Start with the $1 trial — 30 minutes of live tutoring that also serves as your first diagnostic.

At MEB, we’ve found that students who struggle with Reinforcement Learning almost never have a motivation problem — they have a feedback gap. They’ve read the material, watched the lectures, and still can’t close the loop between theory and implementation. A single session with the right correction at the right moment changes that faster than most students expect.

Tutor Match Criteria (How We Pick Your Tutor)

Not every ML expert is the right fit for RL. Here’s what MEB checks before matching you.

Subject depth: Tutors are vetted at the level you’re studying — undergraduate RL theory, graduate deep RL, or research-level multi-agent systems. A tutor who knows PyTorch but can’t derive the policy gradient theorem won’t be sent to a PhD student.

Tools: Every session runs on Google Meet with digital pen-pad or iPad and Apple Pencil annotation. For coding-heavy sessions, screen sharing and live code review are standard.

Time zone: MEB covers New York, Los Angeles, Chicago, London, Dubai, Toronto, Sydney, Melbourne, and all major European time zones — evenings and weekends included.

Learning style: Calibrated from the first session. Some students need proof-heavy derivations slowed down; others need faster-paced implementation walkthroughs. The tutor adjusts after the first 15 minutes.

Communication: Clear English adapted to your level. No assuming prior knowledge that hasn’t been confirmed. No rushing past the step that lost you.

Goals: Whether you need to pass a specific exam, complete a course project, or develop RL depth for a dissertation — the tutor is matched to that goal, not a generic syllabus.

Unlike platforms where you fill out a form and wait, MEB responds in under a minute, 24/7. Tutor match takes under an hour. The $1 trial means you test before you commit. Booking and scheduling run over WhatsApp, with no logins and no intake forms; the sessions themselves take place on Google Meet.

Students consistently tell us that the match matters as much as the subject knowledge. A tutor who knows deep RL but can’t explain the intuition behind the advantage function to a first-year master’s student is the wrong tutor — regardless of their CV. MEB’s matching process accounts for that.

Study Plans (Pick One That Matches Your Goal)

Your tutor builds the exact session sequence after the diagnostic, but these are the three structures most RL students use:

- Catch-up (1–3 weeks): intensive focus on the highest-priority gaps before an exam or project deadline, typically 3–5 sessions per week.

- Exam prep (4–8 weeks): structured coverage of all major RL topics aligned to your paper, with past-problem practice built in.

- Weekly support: one or two sessions per week running alongside your lectures and assignments through the semester. This is the most common choice for graduate students.

Pricing Guide

Standard RL tutoring runs $20–$40/hr. Graduate-level sessions covering deep RL, multi-agent theory, or dissertation support go up to $100/hr depending on tutor expertise and your timeline.

Rate factors include: course level, specific topic complexity (model-based RL commands a higher rate than intro Q-learning), how quickly you need a match, and tutor availability. Sessions close to end-of-semester project deadlines fill fast.

For students targeting top AI research programmes or roles at major tech companies, tutors with active research or industry backgrounds in RL are available at higher rates — share your specific goal and MEB will match the tier to your ambition.

Start with the $1 trial — 30 minutes, no registration, no commitment. WhatsApp MEB for a quick quote.

MEB has served students from over 50 countries since 2008, covering 2,800+ advanced subjects — from undergraduate AI courses to PhD-level research support in machine learning and beyond.

Source: My Engineering Buddy, 2008–2025.

FAQ

Is Reinforcement Learning hard?

Yes — RL is widely considered one of the harder areas of machine learning. The gap between reading about Q-learning and implementing it correctly is significant. Policy gradient derivations, convergence proofs, and debugging training instability all require more than self-study to master reliably.

How many sessions do most students need?

Students catching up before an exam typically need 6–10 focused sessions. Those working through a full RL course over a semester average one to two sessions per week. Your tutor gives a clearer estimate after the first diagnostic session, once your specific gaps are mapped.

Can you help with homework and assignments?

Yes — MEB tutors explain concepts, walk through problem-solving approaches, and help you understand the material so you can complete and submit your own work. For full details on what MEB helps with and what it doesn’t, read our Academic Integrity policy and Why MEB.

Will the tutor match my exact syllabus or exam board?

Yes. Before matching you, MEB confirms the course name, institution, and specific topics you’re working on. Tutors are selected based on that — not just general RL knowledge. If your course uses a specific textbook or framework, share it when you message.

What happens in the first session?

The tutor runs a short diagnostic — targeted questions around your current course material — to find your highest-priority gap. From there, the session moves directly into that topic. No time wasted on material you already know. The session also sets the plan for what follows.

Is online tutoring as effective as in-person?

For RL specifically, online sessions work extremely well. Live annotation over Google Meet with a digital pen-pad closely replicates whiteboard teaching. Screen sharing for code review is more immediate online than in person. Most MEB students see measurable progress within their first two or three sessions.

Can I get Reinforcement Learning help at midnight?

Yes. MEB operates 24/7. Students in US, UK, Gulf, and Australian time zones all message outside standard hours. Tutors are available evenings and weekends. If you’re on a deadline and need a session tonight, WhatsApp MEB and the team will find availability within the hour.

What if I don’t like my assigned tutor?

Tell MEB — no questions, no forms. You’ll be rematched with a different tutor, usually within a few hours. The $1 trial exists partly for this reason: it’s low-risk by design. Most students confirm their tutor after the first session, but changing is always an option.

Do you offer group Reinforcement Learning sessions?

MEB focuses on 1:1 tutoring. If you’re studying with a classmate and want to split session time, mention that when you message — MEB can advise on whether that suits your goals. Individual sessions consistently outperform group formats for closing specific RL gaps quickly.

How do I get started?

Three steps: WhatsApp MEB with your course name and topic, get matched with a verified RL tutor within the hour, then start your $1 trial — 30 minutes of live 1:1 tutoring or one full homework question explained in detail. No registration required, no commitment beyond the dollar.

Trust & Quality at My Engineering Buddy

Every MEB tutor goes through a structured screening process: subject-knowledge assessment, a live demo session evaluated by a subject lead, and ongoing review based on student feedback. Tutors hold advanced degrees in their fields — most at master’s or PhD level — and many bring active research or industry experience in AI and machine learning. Rated 4.8/5 across 40,000+ verified reviews on Google.

MEB tutoring is guided learning: you understand the work, then submit it yourself. For full details on what we help with and what we don’t, read our Academic Integrity policy and Why MEB.

MEB has been serving students across the US, UK, Canada, Australia, the Gulf, and Europe since 2008 — 52,000+ students across 2,800+ subjects. Whether you need help with neural networks tutoring, machine learning help, or 1:1 deep learning tutoring, MEB has a verified tutor ready. Read more about our approach at our tutoring methodology.

MEB tutors are vetted through live demo sessions, subject-knowledge assessments, and ongoing student feedback review — not just a CV check. Every tutor matched to an RL student has demonstrated live problem-solving ability in the subject.

Source: My Engineering Buddy, tutor vetting process documentation.

Try your first session for $1 — 30 minutes of live 1:1 tutoring or one homework question explained in full. No registration. No commitment. WhatsApp MEB now and get matched within the hour.

Explore Related Subjects

Students studying Reinforcement Learning often also need support in:

- Machine Learning

- Deep Learning

- Neural Networks

- Probabilistic Graphical Models

- Computer Vision

- Natural Language Processing (NLP)

- Pattern Recognition

Next Steps

Getting started takes under five minutes.

- Share your course name, exam board or institution, the topic giving you the most trouble, and your exam or project deadline

- Share your availability and time zone — MEB covers all major US, UK, Gulf, and Australian time zones, evenings and weekends

- MEB matches you with a verified Reinforcement Learning tutor — usually within the hour

- Your first session starts with a diagnostic so every minute is used on what actually needs work

Before your first session, have ready:

- Your course syllabus or assignment sheet

- A recent past paper attempt or homework problem you struggled with

- Your exam date or project submission deadline

The tutor handles the rest. Visit www.myengineeringbuddy.com for more on how MEB works.

WhatsApp to get started or email meb@myengineeringbuddy.com.

Reviewed by Subject Expert

This page has been carefully reviewed and validated by our subject expert to ensure accuracy and relevance.