STEM education has changed rapidly with the rise of AI tools. What started as basic support apps has evolved into powerful platforms that can write reports, solve complex equations, and even imitate a student’s writing style with striking accuracy.

This shift goes beyond technology. It directly affects how students learn. When AI does the thinking, students skip the process that builds reasoning, logic, and problem-solving skills. In engineering and other STEM disciplines, where understanding how a solution is reached matters as much as the answer itself, this becomes a serious concern.

That is why detecting AI content in STEM education matters. It helps tutors and educators identify when learning is being replaced by shortcuts, allowing them to step in early, guide students properly, and maintain academic standards. As AI becomes more common, the ability to detect AI-generated work is no longer optional; it is essential.

What AI Detection Tools Are Doing and Why It Matters for Tutors

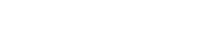

AI detection tools examine sentence structure, predictability, syntax patterns, and stylistic consistency to flag content that may be machine-generated. These tools can provide quick indicators, but they are not definitive proof.
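To make the idea of "stylistic signals" concrete, here is a minimal Python sketch that computes a few crude features of a text: average sentence length, how much sentence length varies, and vocabulary variety. This is only an illustration. The function name `style_signals` and the choice of features are assumptions made for this example, and real detection tools rely on far more sophisticated models of predictability than anything shown here.

```python
import re
import statistics


def style_signals(text: str) -> dict:
    """Compute a few crude stylistic features of a text (illustrative only)."""
    # Split into sentences at ., ! or ? followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    # Lowercased word tokens for the whole text.
    words = re.findall(r"[A-Za-z']+", text.lower())
    # Number of words in each sentence.
    lengths = [len(re.findall(r"[A-Za-z']+", s)) for s in sentences]
    return {
        "sentences": len(sentences),
        "mean_sentence_length": statistics.mean(lengths) if lengths else 0.0,
        # Low variation in sentence length can be one sign of very uniform prose.
        "sentence_length_stdev": statistics.pstdev(lengths) if lengths else 0.0,
        # Share of distinct words: a rough measure of vocabulary variety.
        "type_token_ratio": len(set(words)) / len(words) if words else 0.0,
    }


sample = (
    "The current splits at the node. "
    "Each branch carries part of it. "
    "So the sum is zero."
)
print(style_signals(sample))
```

Numbers like these say nothing on their own. They only become meaningful when compared against a student's earlier work, which is why they support a tutor's judgment rather than replace it.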

Recent studies suggest that over 60% of higher-education students have used AI tools for coursework at least once, often without fully understanding the academic implications. At the same time, research from academic integrity bodies shows that automated detection tools alone can produce false positives, especially when students improve rapidly or follow structured writing formats.

For STEM tutors, this means AI detection tools should act as signals, not verdicts. Human judgment grounded in subject expertise and familiarity with student learning patterns remains the most reliable way to protect academic integrity.
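A short calculation shows why a flag is a signal rather than a verdict. The sketch below applies Bayes' rule to estimate how often a flagged submission is actually AI-generated. All three input rates are hypothetical numbers chosen for illustration, not measurements of any real tool, and the function name `positive_predictive_value` is just a label for this example.

```python
def positive_predictive_value(
    prevalence: float, sensitivity: float, false_positive_rate: float
) -> float:
    """Probability that a flagged submission really is AI-generated (Bayes' rule)."""
    true_flags = prevalence * sensitivity                  # AI-written and flagged
    false_flags = (1 - prevalence) * false_positive_rate   # human-written but flagged
    total_flags = true_flags + false_flags
    return true_flags / total_flags if total_flags else 0.0


# Hypothetical scenario: 20% of submissions are AI-written, the detector
# catches 90% of them, and it wrongly flags 5% of genuine work.
ppv = positive_predictive_value(0.20, 0.90, 0.05)
print(f"Share of flags that are correct: {ppv:.0%}")

# Same hypothetical detector, but only 5% of submissions are AI-written.
ppv_rare = positive_predictive_value(0.05, 0.90, 0.05)
print(f"Share of flags that are correct: {ppv_rare:.0%}")
```

Under these assumed rates, roughly one flag in five is wrong in the first scenario and about half are wrong in the second. The rarer AI use is in a class, the less a flag alone can be trusted.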

While software can flag potential issues, it lacks the nuance to understand why a student wrote something. The table below outlines the fundamental difference between algorithmic detection and human insight.

Software identifies patterns, but only human tutors can verify true understanding of the material.

By understanding these distinct roles, tutors can use detection tools as a preliminary signal rather than a final verdict.

How Tutors Can Detect AI Without Relying on Software

Experienced educators often notice issues before any tool does. Common signs include:

- Sudden jumps in writing quality or formatting that do not match earlier work

- Correct final answers with missing steps, reasoning, or explanations

- Overly generic solutions that fail to reference course-specific methods

- Technical terms used correctly but without contextual understanding

- Repeated phrasing patterns across unrelated assignments

These patterns often manifest as distinct warning signs in the submitted work. Here is a visual checklist of the four most common ‘red flags’ that indicate a student may be bypassing the learning process.

Watch for these four common indicators that a student might be relying on AI instead of their own reasoning.

If you observe a combination of these signs, it is a strong indicator that you need to probe deeper into the student’s reasoning.

For example, a student may submit a perfectly solved circuit analysis but struggle to explain why Kirchhoff’s law was applied at a particular step. In STEM learning, that gap between result and reasoning is a strong indicator that deeper understanding may be missing.

Tutors who want to stay ahead can explore tools designed for detecting AI content, while continuing to trust their own experience and insight into how genuine student work looks and sounds.

The Long-Term Impact on Students and STEM Learning

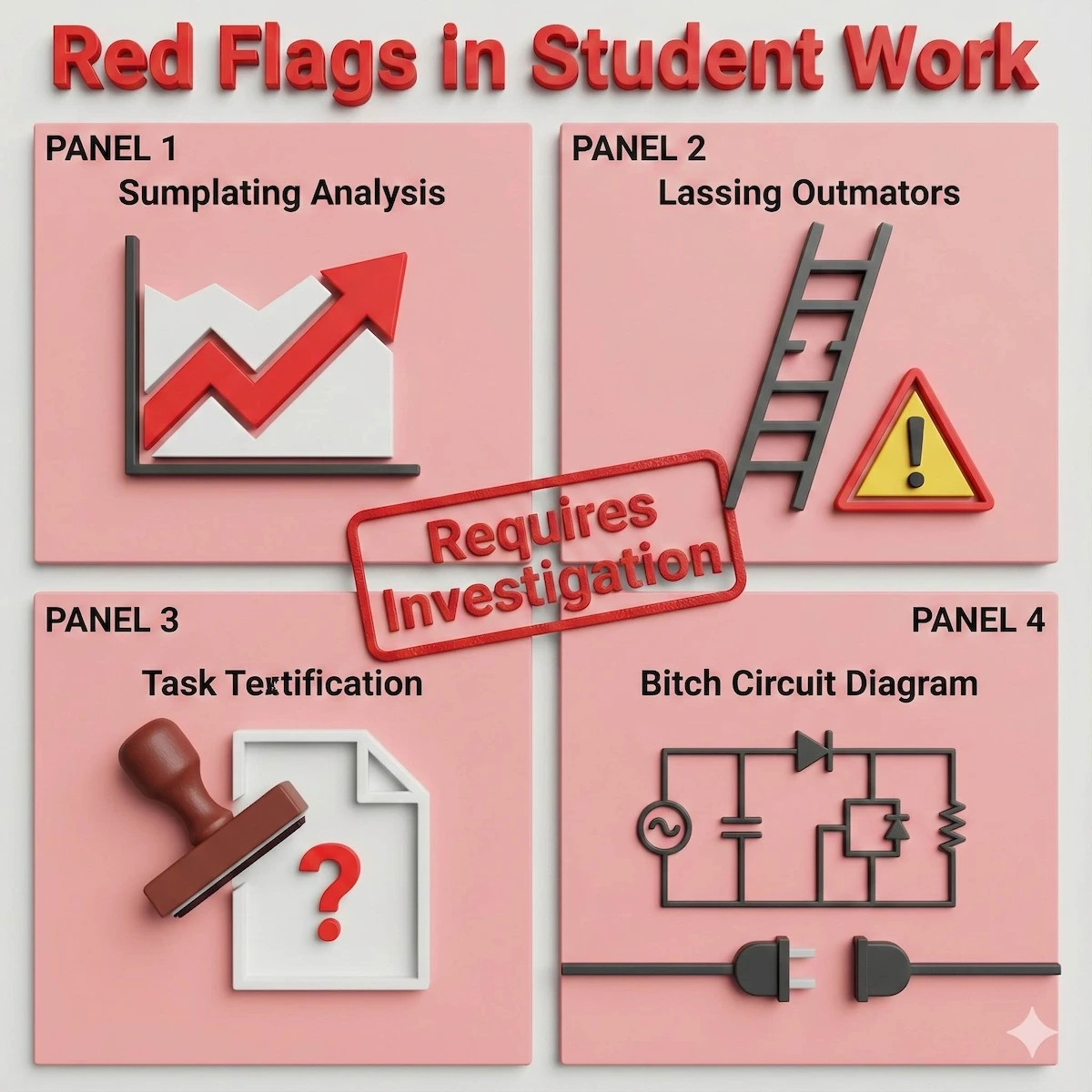

Overreliance on AI does not always show immediate consequences. Assignments get submitted. Grades may even look fine. But the long-term effects become clear over time.

- Loss of problem-solving skills

  Problem-solving in STEM requires repeated struggle. Without working through errors and false starts, students fail to build resilience and analytical depth.

- Struggles in exams and interviews

  In time-pressured exams, labs, or technical interviews, AI tools are unavailable. Students who have not practiced independent reasoning often freeze when faced with unfamiliar problems.

- Inability to explain solutions

  Producing a polished answer is easier than explaining the logic behind it. Many students struggle to articulate their thinking when questioned, especially in professional settings.

- Declining confidence

  As dependence grows, students begin to believe they cannot succeed without AI assistance. This erosion of self-trust can persist even when they actually understand the material.

This is not about catching students doing something wrong. It is about helping them avoid habits that quietly undermine their growth before the damage becomes visible.

The danger isn’t just about a single graded assignment; it is about the psychological habit being formed. This vicious cycle demonstrates how reliance on AI slowly erodes a student’s confidence and capability.

Overreliance on AI creates a cycle that slowly erodes a student’s confidence and problem-solving resilience.

Breaking this cycle early is crucial. Once a student loses the ‘muscle memory’ for problem-solving, rebuilding that self-trust becomes significantly harder.

How Tutors Can Keep Students on the Right Path

AI is here to stay. Students will use it, sometimes without realizing the consequences. The goal is not punishment, but guidance.

When something feels off:

- Ask students to explain their approach

- Walk through their reasoning step by step

- Use AI detection tools if needed

- Keep the conversation open and non-accusatory

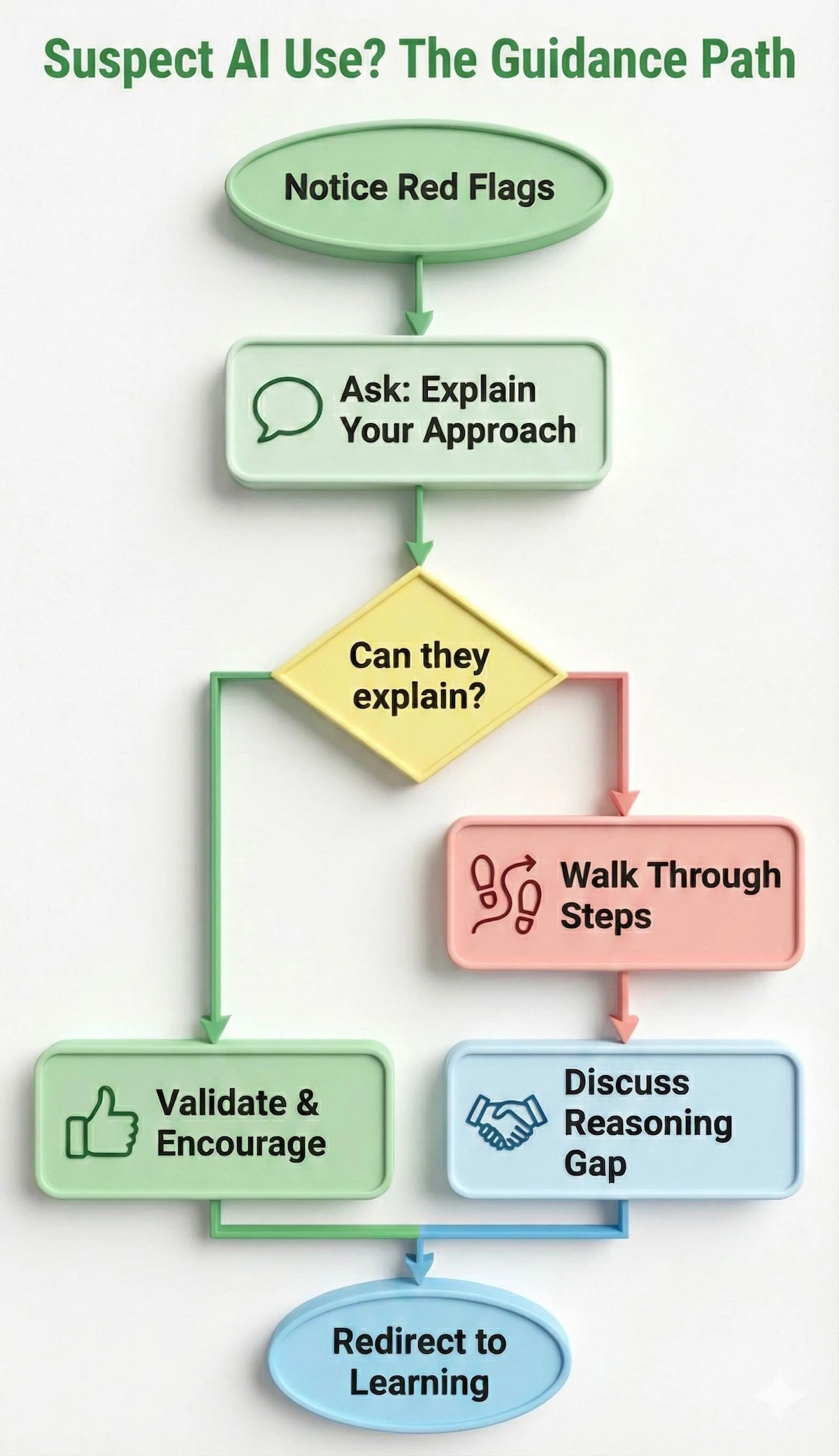

Approaching a student about suspected AI use can be delicate. To ensure the conversation remains constructive rather than confrontational, follow this decision workflow.

A simple workflow to turn suspected AI use into a productive learning moment.

By following this path, you shift the focus from ‘catching a cheater’ to identifying a ‘reasoning gap,’ which turns a potential conflict into a valuable teaching moment.

Often, a simple discussion is enough to redirect a student back toward genuine learning. Early intervention makes all the difference.

Why This Matters Beyond the Classroom

STEM education is not just about mastering formulas or tools. It trains students how to think.

In real-world engineering, research, and technical roles, professionals must explain decisions, test assumptions, and solve problems that do not have predefined answers. Students who skip the learning process carry that weakness into their careers.

Detecting AI content in STEM education helps preserve the thinking habits students need long after their coursework ends.

Final Thoughts

Artificial intelligence can support learning, but it cannot replace real effort, reasoning, and understanding. In STEM education, the learning process matters as much as the outcome, and often more.

Tutors play a critical role in protecting that process. By staying alert, asking the right questions, and guiding students thoughtfully, educators help rebuild habits that lead to lasting growth. This is not about policing behavior. It is about ensuring students learn to think independently and confidently.

FAQs on Detecting AI Content in STEM Education

Is using AI always considered academic misconduct?

No. Responsible AI use for brainstorming or clarification may be acceptable, depending on institutional guidelines. Problems arise when AI replaces independent thinking.

Are AI detection tools always accurate?

No. They can provide indicators but may produce false positives. Human review is essential.

Should tutors completely ban AI tools?

Not necessarily. Teaching students how to use AI responsibly is often more effective than banning it outright.

How can tutors address suspected AI use without accusing students?

By asking students to explain their reasoning and process. Genuine understanding becomes clear through conversation.

Why is detecting AI content especially important in STEM subjects?

Because STEM learning depends heavily on reasoning, problem-solving, and process, not just final answers.

******************************

This article provides general educational guidance only. It is NOT official exam policy, professional academic advice, or guaranteed results. Always verify information with your school, official exam boards (College Board, Cambridge, IB), or qualified professionals before making decisions.